Amplifying vulnerabilities with AI

Despite the title, this is not a post about a new exploitation technique, an exotic zero-day, or a hacking method you can try at your next CTF. It’s an invitation to rethink how we manage risk in an era where attackers no longer need to exploit your zero-day: a well-crafted headline and an AI with good prose are enough.

This happens more often than we’d like to admit. A company can have up-to-date patching, MFA everywhere, a 24/7 SOC, ISO 27001, SOC 2, whatever you want. And still lose hundreds of millions in brand value within 72 hours. The reason? The modern attacker no longer compromises servers. They compromise the narrative.

And the narrative, today, is scalable.

The experiment that got me thinking

Last year, a team from Ludwig Maximilian University of Munich (Kennedy, Parker, Liu, and Schütze) published a study that kept me thinking for weeks. They took the same news story and fed it to nine LLMs with four different instructions: faithful to the original, centrist, left-leaning, and right-leaning.

Result: with a single prompt, the models generate markedly biased versions of the same fact. The technique works.

And if it works for political framing, it works for hostile narratives against a brand. Same mechanics, different vector.

That was the spark. If an AI can rewrite a fact with whatever slant you ask for, what stops it from rewriting a trivial finding as a security crisis? Spoiler: nothing.

Being secure and appearing secure are not the same thing

Here’s a distinction that most organizations never make explicit.

Being secure is what the CISO reports to the board. Patches, controls, MFA, monitoring. Measured by the audit: ISO, SOC 2, a pentest, a compliance report. It’s the mandatory floor.

Appearing secure is something else. It’s what clients see when they google your brand. What the media and social networks say. What shows up in search results when someone types your company name next to the word *“hack”*. The audit doesn’t measure this. The market does: clients who leave, contracts that don’t get signed, brand value that drops.

The trap: an organization can be fully covered on being secure (technically protected) while simultaneously losing on appearing secure. Or the reverse: great at public relations but with the engine on fire inside.

Order matters, because this is not security theater: first be secure, then appear secure. Never the other way around. Appearing secure without being secure doesn’t survive the slightest scrutiny, let alone a real crisis. What I’m arguing is that being secure is no longer enough. You need to be able to demonstrate it at any moment, not just on the eve of the external audit.

Medium and low severity findings count too

For years, security teams have prioritized by severity: critical first, high next, medium and low almost always end up in the backlog. That made sense when the attacker needed to exploit the vulnerability to cause damage.

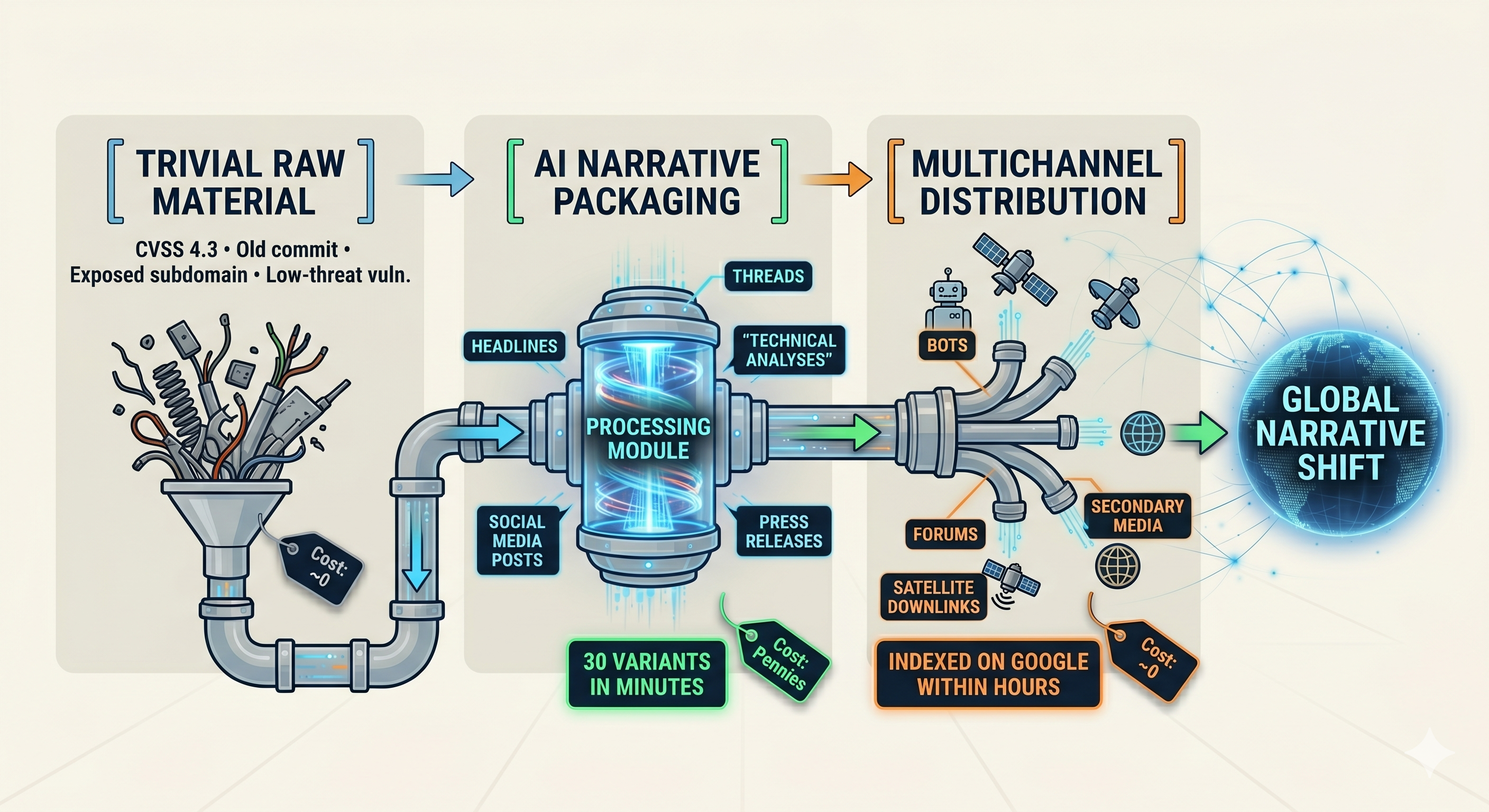

But the modern attacker doesn’t want to exploit. Sometimes they just want to show. And AI has radically changed what can be done with low-severity material.

The pipeline looks like this:

low technical severity + AI amplification = high perceived severity

The result is the formula that closes this entire argument: low technical severity + AI amplification = high perceived severity. And perceived severity is what moves clients, stock prices, and regulators.

Damage without failure: three real cases

To avoid staying abstract, three cases from recent years. In all three, the claim was public, massive, and verifiably false. And in all three, the targeted brand had to defend itself anyway.

Case 1 — Europcar (January 2024): the paradigmatic case

An anonymous actor appeared in a hacking forum offering to sell the data of 48.6 million people allegedly stolen from Europcar, the European car rental company. A breach of that size would have been one of the largest of the year.

Europcar responded quickly and with technical precision: the published data “sample” was clearly fabricated. After internal verification and consultation with threat intelligence services, the company publicly confirmed the announcement was false.

But the narrative damage had already started. And here’s the quote that defines the moment, covered by Dark Reading: thanks to new tools leveraging artificial intelligence and machine learning, it’s easier than ever to falsify allegedly stolen data, leaving it up to humans to fact-check these claims and stop them from spreading.

This is the paradigmatic case of the new risk: a public crisis with no real incident behind it, built from synthetic data that looks authentic.

Case 2 — Colonial Pipeline (October 2023): when the attacker rides your previous history

In October 2023, a group appeared on Telegram claiming to have taken “full control” of Colonial Pipeline’s systems, with torrent links supposedly containing exfiltrated backups. Colonial confirmed hours later that the claims were unfounded.

But the trick is in the name. Colonial Pipeline had suffered a very real, very public breach in 2021, the one that paralyzed fuel supply on the US East Coast and forced the government to declare an emergency. That 2021 coverage stuck in public memory. When someone searches *“Colonial Pipeline hacked”*, that story still appears on the first page.

The 2023 attacker leveraged exactly that. People remember *“Colonial Pipeline = hacked”* and don’t need to verify much more. Colonial had to re-deny something that, for many readers, was already true by historical inertia.

This adds a new dimension to the problem: previous victims are easier targets. If it happened to you once, the next claims seem more credible even if they’re false. The public narrative works in the attacker’s favor.

Case 3 — Dollar Tree (July 2025): when the data isn’t even yours

INC Ransom claimed to have compromised Dollar Tree, one of the largest discount retail chains in the United States. They published data samples.

Dollar Tree investigated and responded with an unexpected twist: the data was real, but it wasn’t theirs. It belonged to 99 Cents Only Stores, a competing discount chain that had filed for bankruptcy and closed the previous year. The attacker had confused (or wanted to confuse) similar brands in the same sector.

Dollar Tree had to defend itself against a breach that wasn’t even theirs. 99 Cents Only couldn’t defend itself (it no longer exists).

This adds yet another dimension to the problem: an attacker can damage your brand with data that isn’t even yours, simply because your brand is better known than the actual source. The asymmetry is brutal: Dollar Tree paid in time, media attention, and corporate communications to prove it was a mix-up. The same thing can happen to you tomorrow.

The pattern across all three cases

In all three, no technical failure. In all three, a public and massive claim. In all three, the victim was right in their rebuttal. And in all three, the narrative damage happened anyway: media coverage, client anxiety, social media noise, permanently indexed in search engines.

The defense here is not technical (their infrastructures were fine). The defense is communicational and fast: monitoring mentions in forums and dark web, technical capability to verify data authenticity within hours, a technical spokesperson available, a precise public statement, and a pre-approved narrative playbook. If that’s not ready, you lose that fight even if your infrastructure is perfect.
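
One piece of that capability, checking a published “sample” against your own records, can be sketched in a few lines. This is a minimal illustration and not a forensic method: the function names, the choice of email as the comparison field, and the example data are all assumptions made for the sketch. A low match rate is a signal to investigate further, not proof of fabrication.

```python
import hashlib


def fingerprint(email: str) -> str:
    """Normalize an email and hash it so raw values need not be compared directly."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def match_rate(sample_emails: list[str], known_fingerprints: set[str]) -> float:
    """Return the fraction of sample emails that exist in our own records."""
    if not sample_emails:
        return 0.0
    hits = sum(1 for e in sample_emails if fingerprint(e) in known_fingerprints)
    return hits / len(sample_emails)


# Hypothetical data: our customer base and the attacker's published "sample".
our_customers = {fingerprint(e) for e in ["ana@example.com", "li@example.com"]}
leaked_sample = [
    "Ana@Example.com ",      # matches after normalization
    "ghost1@example.net",
    "ghost2@example.net",
    "ghost3@example.net",
]

rate = match_rate(leaked_sample, our_customers)
print(f"{rate:.0%} of the sample matches our records")
```

The same comparison also helps in a Dollar Tree type of situation, where the data is real but belongs to someone else: the records look authentic, yet almost none of them match your own customer base.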

But what if you actually failed?

So far we have talked about damage when there’s no real incident. But there’s another extreme of the problem, completely different, and worth naming with equal seriousness: when there was a real failure, and public accountability is legitimate.

The case that best illustrates this is RIBridges, Rhode Island’s social services system operated by Deloitte. In December 2024, something very different from the previous three cases happened. There are no unsupported claims or exaggerated narratives here. There is a real failure, and it deserves to be named with the gravity it carries.

- Access came through a non-production VPN that had connectivity to sensitive data. That’s already a design problem.

- CrowdStrike, contracted to investigate, found that the firewall had generated hundreds of alerts during the 18 days in which data was being exfiltrated. The alerts were not addressed.

- 657,000 people initially notified. And these aren’t just anyone: they are beneficiaries of social welfare programs, many of them people in vulnerable situations, with sensitive data exposed.

This is not a minor technical detail that the press amplified. It is poor management with proportional consequences. Any one of those three points alone is serious. All three together over 18 days is operational negligence — from a vendor whose entire value proposition is knowing how to manage exactly these kinds of systems.

That’s why the state’s governor called out Deloitte by name. It wasn’t media noise. It was legitimate public accountability.

And here’s what matters: no narrative playbook saves you. If you truly failed, the only thing that saves you is having done the work well before. And being able to prove it.

Two types of damage, three scenarios

Reputational damage comes from two opposite directions. One attacks you when you didn’t fail (Europcar, Colonial, Dollar Tree). The other exposes you when you did fail (RIBridges). Those are the two vectors of the problem, and AI amplifies both.

But where the crisis finds you doesn’t depend only on the attacker. It also depends on your capabilities. And that’s where three distinct operational scenarios appear, not two. The missing one is the most common and the worst of all.

Scenario 1 — No failure, with the ability to prove it. The Europcar case. Fast communicational defense backed by technical verification. Narrative monitoring, communication playbook, technical spokespeople ready, crisis simulations, ability to verify data authenticity within hours.

Scenario 2 — With failure, with the ability to explain it. The RIBridges case. No narrative playbook is worth anything here; the only thing that counts is having done the work well before and being able to prove it. Logs, tickets, signed decisions, active controls, independent validation. When the governor calls, you’re not fighting a narrative: you’re answering for a real failure. And the difference between “you sound credible” and “you sound like you’re making excuses” is determined by the auditable evidence you built before.

Scenario 3 — Not knowing which scenario you’re in. The black hole.

The phone rings, the journalist asks, and the honest internal answer is neither *“it’s false”* nor *“it’s real”*. It’s *“we don’t know”*. We don’t know because the affected system’s logs have a 7-day retention period and the alleged incident was two months ago. We don’t know because that asset wasn’t in the updated inventory. We don’t know because the telemetry never covered that piece. We don’t know because we never ran a threat hunting exercise for that type of attack. We don’t know because the capabilities to answer with certainty were never built.

And “we don’t know” is the worst position of the three. Worse than Europcar, where you can rebut with data. Worse even than RIBridges, where you can at least show you know the scope and are responding. In the void, the attacker’s headline wins by default. Any silence is interpreted as confirmation. Any evasion feeds the headline. Any attempt to buy time translates to *“the company is not responding”*.

But there’s an even more concrete cost, often underestimated in these conversations. When we can’t determine whether we were compromised or not, the default defensive decision tends to be pulling the lever: shutting down systems, isolating networks, suspending services until we can ensure the environment is clean. And that, in a modern organization, means stopping operations. It means sales lost by the hour, SLAs missed with clients, contracts at risk, entire teams unable to work. “Not knowing” stops being a communication problem and becomes a measurable loss on financial statements. And worse: the press is now covering why you’re offline, clients are receiving service interruption notifications, and regulators are starting to ask questions about your level of preparedness. Reputational damage multiplies, and regulatory liability becomes concrete. What started as a journalist’s call ends as an operational, financial, and compliance crisis all at once.

The public crisis doesn’t create the technical debt. It only exposes it, within hours, after years of accumulation. And that is the real exam most organizations haven’t sat for yet: could we today, if the call came now, answer with certainty what happened in our systems over a given period? If the honest answer is *“depends on the system”* or *“we’d have to look into it”*, the reputational problem is only a matter of time.
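
That exam can be rehearsed per system before any call arrives. A minimal sketch, assuming a hypothetical inventory that maps each system to its log retention in days (the system names and numbers are invented): given the date of an alleged incident, it lists the systems whose logs would already have expired.

```python
from datetime import date


def unanswerable_systems(
    retention_days: dict[str, int], incident: date, today: date
) -> list[str]:
    """Return systems whose logs no longer reach back to the incident date."""
    age_days = (today - incident).days
    return sorted(name for name, days in retention_days.items() if days < age_days)


# Hypothetical inventory: system name -> log retention in days.
inventory = {"web-frontend": 7, "payments-api": 365, "legacy-crm": 30}

# Alleged incident two months before the journalist's call.
gaps = unanswerable_systems(
    inventory, incident=date(2025, 1, 10), today=date(2025, 3, 10)
)
print(gaps)
```

Note what the sketch cannot see: any asset missing from the inventory never shows up in the result, which is exactly the blind spot described above.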

And why doesn’t this scenario appear in the headlines if it’s the most common? Because the organizations that go through it don’t come out and talk. They can’t. Some companies might also be in this scenario without knowing it yet, discovering it only when a journalist asks about an asset no one has mapped, a system whose logs expired, or an incident that left no trace. Some might respond to the call with prolonged silence while internally trying to reconstruct what happened — and that silence, in the era of social media and generative AI, is interpreted as confirmation. Some might end up paying out-of-court settlements or absorbing the reputational damage without fighting, simply because they have nothing to defend themselves with. And others might end up issuing generic statements that neither confirm nor deny, choosing to wait out the media cycle rather than defend a position they can’t sustain with data. None of those outcomes appears in ransomware reports or breach statistics, because they aren’t breaches — they are exposed capability failures. But the organization pays the cost just the same.

Why it works: the human brain

This doesn’t work because AI is magic. It works because our brains are wired for it to work. Negativity bias and the speed at which false news spreads are well documented (Cacioppo, Hanson; Vosoughi et al., MIT, Science 2018): the amygdala obsesses over the negative, the social reflex rewards the novel, and false news travels far faster than true news. An alarming headline about your brand, generated by AI, meets both conditions perfectly.

The attacker doesn’t need to deceive your organization. They exploit how the brain of whoever reads the news is wired.

The numbers confirm it

In case anyone still thinks this is futuristic discourse, some solid data from the past year:

- 82.6% of phishing emails analyzed already show signs of AI generation (KnowBe4 / SlashNext, 2025).

- 11 minutes: the mean time between detection of exposed data and the first automated exploitation attempt (compared to hours or days in the pre-AI era).

- USD 200M+ in losses reported from deepfake fraud in Q1 2025 alone in the United States (FinCEN, Wall Street Journal).

Other figures are circulating (“+1265% in phishing post-ChatGPT”, “+680% in cloned voice”) that come mostly from vendor reports with non-transparent methodology. I use them with caution and prefer to stick with those that have verifiable methods. But the pattern is clear even conservatively: AI didn’t invent the attack. It made it cheaper, scaled it, and accelerated it.

What if the story reaches a serious outlet?

This is the question that should scare us the most, and it applies to both types of damage. It’s 9 AM. A national newspaper (or a news agency) receives an email: screenshots, a “sample” file, a link to the dark forum post where someone claims to have a terabyte of your data. Or a piece of data leaked from a real incident. The journalist covers technology but isn’t a cybersecurity specialist. They can’t tell the difference between a real breach and a claim. They’re convinced they have a scoop. They want to publish today.

And here’s the qualitative shift. We used to see fake news in tabloids, not in serious outlets — because serious media had a human verification process that filtered out the most obvious fakes. In the AI era, seeing fake news presented as serious is no longer surprising. The reason? The journalist, however professional, simply doesn’t have the perceptual tools to distinguish a real video from one generated by AI. The same applies to cloned audio, fabricated screenshots, PDFs with fake letterheads. The defensive line that traditionally separated the serious outlet from the tabloid has shifted, and the attacker knows it.

Five things happen simultaneously:

- The outlet gives the rumor or fact institutional credibility.

- The journalist has no framework to distinguish a claim from a confirmed breach — nor tools to detect synthetic content.

- Screenshots, files, audio, and videos look like evidence.

- The editorial deadline runs faster than the legal deadline.

- Once published, other outlets replicate without verifying.

How many of you have a protocol today for that 9 AM call?

The checklist before communicating

Before talking to the journalist, before drafting the statement, before notifying clients, there’s one question you need to be able to answer with a yes. Do you have the evidence to back what you’re about to say?

And pay attention to the nuance: the checklist works for both types of damage. If it’s a Europcar-type case (you didn’t fail), the evidence lets you sustain your defense with verifiable data and prove the attacker’s “sample” is fake. If it’s a RIBridges-type case (you did fail), the evidence lets you show you know the scope, that you’re responding, and that you’re not improvising. In both cases, without evidence, any public response is an unsupported statement. And an unsupported statement is the best way to turn an incident into a crisis when the narrative starts contradicting the facts.

- Logs of the affected system, preserved and accessible.

- Management ticket with an assigned owner.

- Decision and approver traceable (who, when, why).

- Patch and control status at the time of the incident.

- Internal communications documented during the response.

- Independent validation (recent pentest, auditor, SOC).

If you can’t show evidence of how something was managed, for practical purposes it wasn’t managed. Good cybersecurity management isn’t declared: it’s evidenced.
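
The six items above can be tracked mechanically. A minimal sketch, with hypothetical field names that mirror the checklist; it reports what is missing instead of a bare yes or no, since the gaps are what the response team needs to close before speaking.

```python
from dataclasses import dataclass, fields


@dataclass
class EvidenceChecklist:
    """One flag per checklist item; the names are illustrative, not a standard."""
    logs_preserved: bool = False
    ticket_with_owner: bool = False
    decision_traceable: bool = False
    patch_status_known: bool = False
    comms_documented: bool = False
    independent_validation: bool = False

    def missing(self) -> list[str]:
        """Return the names of items that cannot be evidenced yet."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def ready_to_communicate(self) -> bool:
        """True only when every item on the checklist is backed by evidence."""
        return not self.missing()


# Hypothetical state at the moment the journalist calls.
status = EvidenceChecklist(
    logs_preserved=True, ticket_with_owner=True, patch_status_known=True
)
print(status.ready_to_communicate(), status.missing())
```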

Ignore it or manage it

AI-driven reputational risk is real, quantified, and growing. The question isn’t whether it affects us. It’s what we’re going to do about it. And there are only two possible answers.

Ignoring it means waiting for the first incident, assuming it won’t happen to us, reacting by improvising under pressure when the phone rings at 9 AM. Cost when it happens: incalculable.

Managing it means preparing auditable evidence today, building the playbook before you need it, training technical spokespeople, simulating crises. Cost of the investment: plannable.

Ignoring it is also a decision, even if the organization doesn’t make it consciously. Omission is a decision. The question is whether we want it to be explicit or by default.

Three fronts for Monday morning

If everything above reduces to something actionable Monday at 9 AM, it’s three fronts. In this order.

1. Auditable evidence, continuous and at hand.

Logs, tickets, patches, approved decisions with who and when, independent validation. Available at any time, not only on the eve of the external audit. When the call comes, the only thing that distinguishes a credible response from an improvised excuse is the evidence you built before. This is the foundation. Without this, everything else is decoration.

2. Train, train, and train.

Regular tabletop exercises for all three scenarios: the amplified false claim (Europcar type), the real exposed failure (RIBridges type), and (the most uncomfortable) the scenario where we can’t answer with certainty whether we failed or not. And not just the technical team — also communications, legal, and leadership. All four functions, together, in the simulation. Practice how the technical spokesperson sounds on the phone with a journalist at 2 AM. Practice drafting the statement in 90 minutes. Practice who decides what, without waiting for the committee. And practice what to do when we ourselves don’t know what happened. What isn’t trained, won’t be executed under pressure.

3. Rethink prioritization on exposed systems.

Criticality still leads — this isn’t “patch everything,” that’s unworkable and nobody’s going to do it. But medium and low severity vulnerabilities on internet-facing assets need to start moving up in the remediation queue. For two reasons that compound:

- Immediate reputational risk. They are the raw material an attacker needs to build an amplifiable half-truth. Without a minimal verifiable input, the attacker has to fabricate from scratch — and pure fabrication is easier to rebut. Closing the exposed mediums and lows removes ammunition from the hostile narrative before it’s even written.

- Growing operational risk. Time works for the attacker. That medium or low finding forgotten in the backlog tends to chain with others, get reweaponized, or accumulate until it becomes the vector for a real compromise. What today is CVSS 4.3 (information disclosure) tomorrow can be the first link in a chain that ends in RCE.

The investment is the same. The return is double: it protects against the hostile headline today and the technical compromise tomorrow.

I know this costs. Building continuous evidence capabilities, narrative monitoring, and cross-functional simulations isn’t free, and many security teams are fighting for a baseline budget, not a new one. But the cost of the first crisis without this is orders of magnitude greater — and that calculation, translated into brand value impact, lost contracts, board hours, and external legal advisory fees, is the conversation worth having with the CFO before the journalist’s call opens it for us.

What we need to rethink

And here’s the uncomfortable conclusion. If the above makes sense, then typical remediation timelines no longer serve us. Those SLAs were designed for an era where the attacker was exclusively technical and needed to exploit to cause damage.

The modern attacker doesn’t operate on those timelines. They put together a narrative campaign amplified by AI in hours. And while we wait for next quarter’s patch cycle, that same vulnerability can appear in a headline the day after tomorrow.

We need to rethink two things simultaneously:

- How we remediate. Processes, cycles, automation. Faster, with less operational friction.

- How we prioritize what’s exposed. A new factor in the matrix: public visibility of the asset. Not to replace CVSS, but to complement it.

A direction, not a closed recipe: for internet-facing assets with narratively useful raw material (public subdomains, accessible repositories, endpoints returning informative errors, visible third-party integrations), remediation windows should be measured in days, not months, even when the CVSS is low. What breaks this isn’t the technical severity of the bug. It’s the combination of public exposure plus plausible narrative input. And that combination defines a class of risk that traditional matrices don’t see.
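
One way to picture that extra factor: the sketch below keeps CVSS as the base and caps the remediation window when an asset is both internet-facing and a plausible narrative input. The thresholds and day counts are placeholders invented for illustration, not recommendations, and the `Finding` fields are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    cvss: float              # base score, 0.0 to 10.0
    internet_facing: bool    # publicly reachable asset
    narrative_input: bool    # e.g. verbose errors, visible integrations


def remediation_window_days(f: Finding) -> int:
    """Return a remediation window in days; all numbers are illustrative."""
    if f.cvss >= 9.0:
        base = 7
    elif f.cvss >= 7.0:
        base = 30
    elif f.cvss >= 4.0:
        base = 90
    else:
        base = 180
    # Public exposure plus narrative value caps the window, whatever the CVSS.
    if f.internet_facing and f.narrative_input:
        return min(base, 14)
    return base


backlog = [
    Finding("verbose error on public API", 4.3, True, True),
    Finding("weak cipher on internal host", 5.9, False, False),
]
for f in sorted(backlog, key=remediation_window_days):
    print(f.name, remediation_window_days(f))
```

CVSS still decides the base window, so criticality keeps leading; the exposure factor only shortens the window, it never lengthens it.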

The threats ahead are no longer only technical. They are technical, narrative, social, and perceptual — all at once. If we keep measuring risk with a ten-year-old ruler, we’ll be late to the next crisis. And the next headline doesn’t wait for next month’s sprint.

How many times have you seen in your organizations the phrase *“if it’s not critical, we leave it in the backlog”* become the foundation of a public crisis months later?